Hey, at least Microsoft's news-curating artificial intelligence doesn't have an ego. That much was made clear today after the company's news app highlighted Microsoft's most recent racist failure.

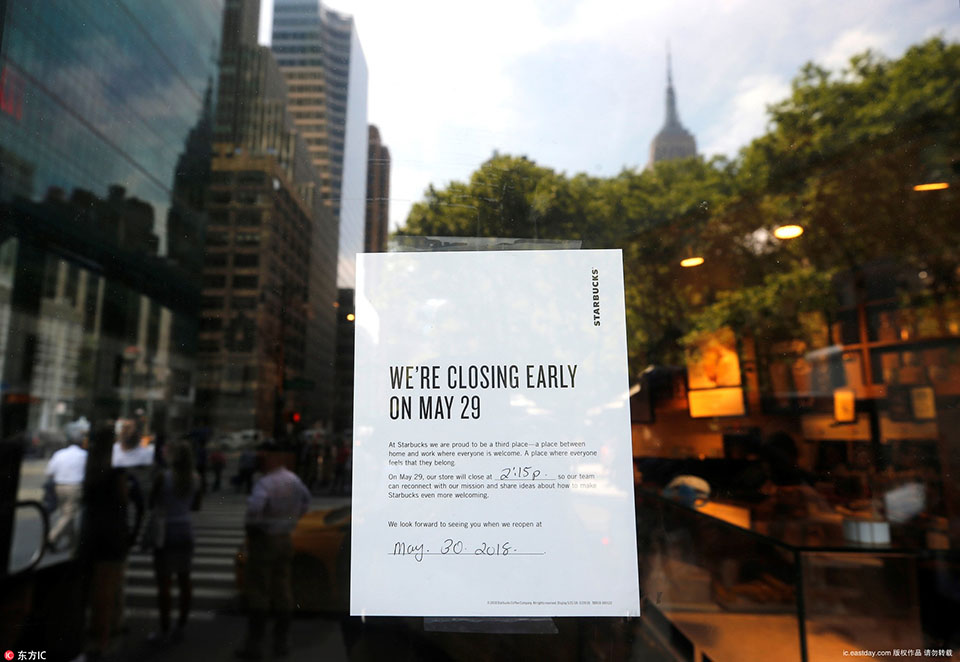

The inciting incident for this entire debacle appears to be Microsoft's late May decision to fire some human editors and journalists responsible for MSN.com and have its AI curate and aggregate stories for the site instead. Following that move, The Guardian reported earlier today that Microsoft's AI confused two members of the pop band Little Mix, who both happen to be women of color, in a republished story originally reported by The Independent. Then, after band member Jade Thirlwall called it out for the screwup, the AI published stories about its own failing.

So, to recap: Microsoft's AI made a racist error while aggregating another outlet's reporting, got called out for doing so, and then elevated the coverage of its own outing. Notably, this is after Microsoft's human employees were reportedly told to manually remove stories about the Little Mix incident from MSN.com.

Still with me?

"This shit happens to @leighannepinnock and I ALL THE TIME that it's become a running joke," Thirlwall reportedly wrote in an Instagram story, which is no longer visible on her account, about the incident. "It offends me that you couldn't differentiate the two women of colour out of four members of a group … DO BETTER!"

As of the time of this writing, a quick search on the Microsoft News app shows at least one such story remains.

A story from T-Break Tech covering the AI's failings as it appears on the Microsoft News app. Credit: screenshot / microsoft news app

Notably, Guardian editor Jim Waterson spotted several more examples before they were apparently pulled.

"Microsoft's artificial intelligence news app is now swamped with stories selected by the news robot about the news robot backfiring," he wrote on Twitter.

We reached out to Microsoft in an attempt to determine just what, exactly, the hell is going on over there. According to a company spokesperson, the problem is not one of AI gone wrong. No, of course not. It's not like machine learning has a long history of bias (oh, wait). Instead, the spokesperson insisted, the issue was simply that Microsoft's AI selected the wrong photo for the initial article in question.

"In testing a new feature to select an alternate image, rather than defaulting to the first photo, a different image on the page of the original article was paired with the headline of the piece," wrote the spokesperson in an email. "This made it erroneously appear as though the headline was a caption for the picture. As soon as we became aware of this issue, we immediately took action to resolve it, replaced the incorrect image and turned off this new feature."

Unfortunately, the spokesperson did not respond to our question about human Microsoft employees deleting coverage of the initial AI error from Microsoft's news platforms.

Microsoft has a troubled recent history when it comes to artificial intelligence and race. In 2016, the company released a social media chatbot dubbed Tay. In under a day, the chatbot began publishing racist statements. The company subsequently pulled Tay offline, attempted to release an updated version, and then had to pull it offline again.

As evidenced today by the ongoing debacle with its own news-curating AI, Microsoft still has some work to do, both in the artificial intelligence and not-being-racist departments.
